Last Updated: March 18, 2026

Most companies do not struggle to collect customer feedback. They struggle to collect feedback they can trust. A customer survey can be one of the most effective ways to understand what customers are experiencing, where things are falling short, and what needs attention first.

But when the questions are weak, the audience is off, or the analysis is superficial, the result is not insight. It is false confidence. That is why the real value of customer surveys depends less on sending them and more on designing them well.

At Interaction Metrics, we write surveys, deploy them, and conduct the analysis. You’ll have a turnkey solution with a Findings Report that separates the signal from the noise.

Many companies operate under a loyalty illusion: the gap between how well a business thinks it is doing and how customers actually feel. That gap matters because customer loyalty is often more fragile than leaders assume. According to the PwC 2025 Customer Experience Survey, 52% of customers will walk away from a brand after just one bad experience.

Do Customer Surveys Work?

Customer surveys work when the sample, wording, timing, and analysis are handled carefully. They remain one of the most cost-effective and scalable research methods because they collect structured feedback at a scale interviews usually cannot match.

But surveys are not automatically useful just because they are easy to launch. Online surveys can be deployed quickly, yet that same speed often leads to weak questionnaires, poor answer options, and superficial analysis. Surveys work best when they are part of a listening system, not a one-time task.

For many companies, the appeal is obvious. Surveys are relatively easy to implement, can reach large samples across products or touchpoints, and help track change over time. They can show how customers felt after a purchase, how easy support was to use, or whether a service interaction is strengthening loyalty.


What Do Customer Surveys Actually Measure?

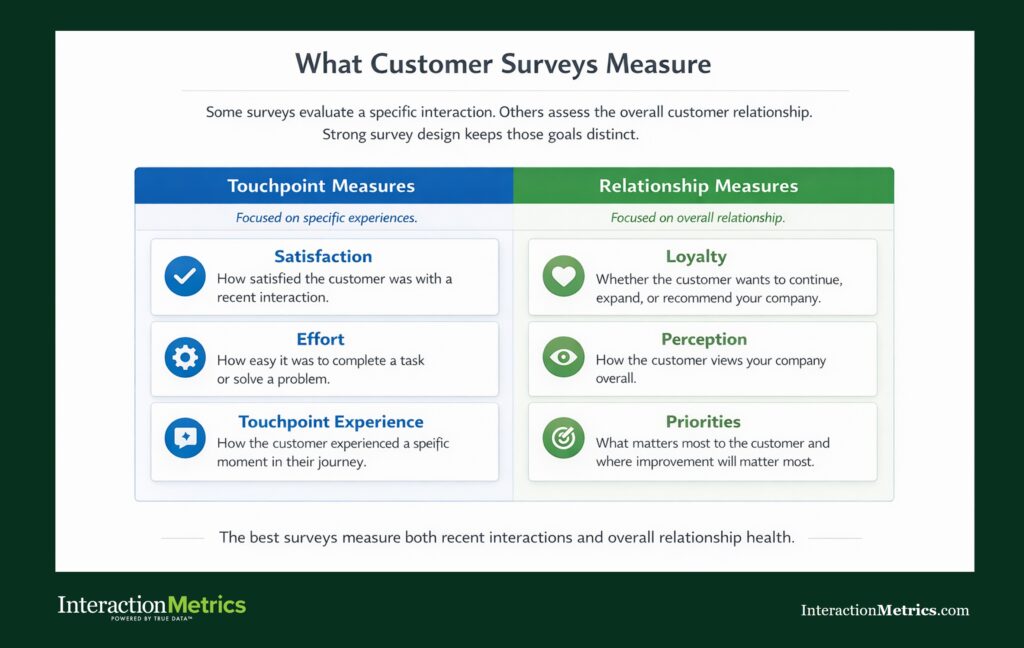

Companies survey their customers to measure their satisfaction, loyalty, effort, perception, priorities, and experience. A well-designed survey helps a company understand not just whether customers are happy, but which parts of the experience are shaping that result.

Surveys can evaluate a recent purchase, a support interaction, an account relationship, a delivery experience, or the overall quality of services. They also help distinguish between different kinds of feedback. Some surveys are built around a specific interaction, while others measure the broader relationship. That distinction matters because a customer may be satisfied with one touchpoint and frustrated with the business overall.

Strong surveys also combine quantitative and qualitative insight. Ratings make it possible to compare groups and track scores, while open-ended questions explain why respondents answered the way they did. That combination usually produces more value than a score alone.

Surveys also complement other research methods. They can reach many participants efficiently, while interviews and observational research provide more depth. Surveys do not capture body language or allow real-time probing, but they remain a practical way to gather structured data from large samples.

What Are the Main Advantages of Customer Surveys?

The main advantages of customer surveys are scale, speed, consistency, repeatability, and cost effectiveness. They allow companies to collect feedback from many respondents at once, which makes them especially useful for spotting patterns across a large customer base.

One major advantage is reach. Online surveys make it possible to gather customer feedback from large samples without turning every research project into a highly time-consuming effort. Compared with interviews or manual outreach, surveys are one of the most efficient ways to gather input across products, locations, or services.

Another advantage is consistency. Using the same survey questions and answer options across respondents makes it easier to compare responses, analyze trends, and determine whether satisfaction is improving or slipping in specific areas.

Surveys also create a repeatable listening system. A company can run them after a purchase, after support, during onboarding, or at key points in the relationship. That makes it easier to track change over time instead of relying on a one-time snapshot.

They can also surface both expected and unexpected issues. A rating may confirm a known concern, while open text may reveal new suggestions, competitor mentions, or frustrations leadership did not anticipate. When findings are organized clearly, teams can prioritize resources and make decisions based on data instead of anecdote.

Why Are Customer Surveys Considered Cost-Effective?

Customer surveys are considered cost-effective because they can gather large amounts of feedback with fewer resources than many other research methods. They make it possible to reach many respondents without the scheduling and staffing demands that come with interviews or live qualitative research.

Still, cost-effectiveness depends on design quality. A survey can be cheap to launch and expensive in its consequences. If the questions are weak, the target audience is wrong, or the answer options distort the results, the business may act on inaccurate data.

That is why survey design matters more than cheap deployment. The value of customer surveys does not come from low distribution cost alone. It comes from collecting data that helps a company decide what to fix, what to improve, and what to protect.

What Are the Disadvantages of Customer Surveys?

The biggest problem with surveys is that customers complete them after the fact, and customers simply don’t remember you that well or think about you that much. That’s where other methods, such as interviews, real-time observations, and customer service evaluations, come in. So when it comes to the customer experience, the best approach always combines several methods.

Most customer surveys (but not Interaction Metrics surveys) suffer from the following deficits: biased questions, limited depth, low engagement, and weak interpretation. Surveys can produce misleading answers when they are rushed or treated like templates instead of research tools.

One problem is poor wording. Leading, vague, or double-barreled survey questions can push respondents toward answers that do not reflect their true experience. Even small wording changes can affect how people interpret the question.

Another risk is sampling bias. If the wrong customers receive the survey, or if only certain types of customers respond, the results may not represent the full customer base. This is how sampling and nonresponse bias can weaken customer feedback data and make satisfaction look better or worse than it really is.
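One simple way to look for this problem is to compare response rates across customer segments before interpreting the scores. The Python sketch below is illustrative only: the segment names, counts, and the `flag_underrepresented` helper are hypothetical, and the 50% threshold is an arbitrary assumption rather than an industry rule.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    invited: int
    responded: int

    @property
    def response_rate(self) -> float:
        # Share of invited customers who completed the survey.
        return self.responded / self.invited if self.invited else 0.0


def flag_underrepresented(segments: list[Segment], tolerance: float = 0.5) -> list[str]:
    """Return segments whose response rate is below `tolerance` times the overall rate.

    The 0.5 default is an illustrative assumption, not an industry standard.
    """
    total_invited = sum(s.invited for s in segments)
    total_responded = sum(s.responded for s in segments)
    overall = total_responded / total_invited if total_invited else 0.0
    return [s.name for s in segments if s.response_rate < tolerance * overall]


# Hypothetical invitation and response counts.
segments = [
    Segment("enterprise", invited=200, responded=60),
    Segment("mid-market", invited=500, responded=110),
    Segment("small business", invited=1000, responded=40),
]

for s in segments:
    print(f"{s.name}: {s.response_rate:.0%}")
print("Underrepresented:", flag_underrepresented(segments))
```

A low response rate does not prove the results are biased, but it shows whose views the overall averages may be missing.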

Survey fatigue is another challenge. When companies send too many surveys or ask too many questions, respondents disengage. Response rates drop, attention falls, and answers become rushed.

Surveys also have limited depth. They can reveal patterns, but they do not always explain the full story behind those patterns. A rating may show that satisfaction dropped without capturing the emotional nuance behind it. Surveys are strong on breadth, but they are not always enough for depth.

A final disadvantage is superficial analysis. Many companies collect responses and stop at averages or dashboards. They never fully analyze what is driving the score, which segments are most affected, or what themes appear repeatedly in written feedback.

Why Do Customer Surveys Sometimes Fail?

Poor design, poor sampling, weak analysis, and unclear purpose distort the findings of customer surveys. Surveys fail when companies focus on sending them rather than on building a valid research process.

A common failure point is generic design. Some surveys ask broad questions that sound polished but do not help determine anything actionable. Others use internal language that does not match how customers think about the experience. When the wording is vague or irrelevant, the answers do not lead to useful decisions.

Timing and scope are other issues. A survey sent long after an interaction asks customers to recall details they may have forgotten, and every survey needs a clear audience and a clear purpose. A relationship survey should not be treated like a transaction survey, and a post-purchase survey should not ask about parts of the journey the respondent did not experience.

Surveys also fail when companies rely too heavily on a single number. A Net Promoter Score or satisfaction score can be useful, but a score without context can mislead management. It tells you where to look, not what caused the problem.

Internal influence can weaken results too. If the team being evaluated controls the survey process, respondents may be less candid, and the organization may unintentionally shape the survey around a desired outcome.

Finally, surveys fail when the organization does not act on the feedback. Customers notice when companies ask for suggestions but never communicate any change.

What Does Good Customer Survey Design Look Like?

Good customer survey design is specific, unbiased, concise, and tied to a clear decision. Strong design produces better responses because it respects what customers can realistically evaluate and what the business actually needs to learn.

A well-designed survey starts with scope. Before writing survey questions, a company should determine what decision the survey is meant to inform. Is the goal to measure customer satisfaction after a purchase, evaluate support services, compare competitors, or understand the health of the relationship? That decision shapes the questionnaire, timing, and participant list.

Good design also starts with the customer’s point of view. The survey should use language customers recognize, ask about experiences they actually had, and avoid forcing them to guess or interpret vague concepts.

Neutral wording is essential. Strong questions are direct, balanced, and easy to answer. Strong answer options matter too. If the options are uneven, incomplete, or confusing, they can reduce accuracy even when the question itself is well written.

Good design also keeps the process manageable. A concise survey usually performs better than a long one because it is easier to complete thoughtfully. Each question should earn its place.

The best surveys balance structure and explanation. Quantitative questions make it possible to track and compare results, while open-ended questions add depth by letting respondents explain their answers in their own words.

| Common Pitfall | The Impact | The Effective Design Fix |
|---|---|---|
| Double-Barreled Questions (e.g., “Was our staff helpful and polite?”) | Confuses the respondent. If the staff was helpful but rude, they don’t know how to answer. | Isolate Concepts. Ask separate questions: “Was our staff helpful?” and “Was our staff polite?” |
| Leading or Biased Wording (e.g., “How great was your support experience?”) | Pushes respondents toward positive answers, creating false confidence. | Use Neutral Wording. Ask, “How would you rate your support experience?” with a balanced scale. |
| Overly Complex Answer Scales (e.g., a 1–20 scale with vague descriptions) | Increases cognitive load, leading to inconsistent and rushed responses. | Use Clear, Standardized Scales. Stick to 5- or 7-point Likert scales or 0–10 NPS scales with clear labels. |
| Superficial Analysis (stopping at averages or dashboards) | Fails to identify what is actually driving the score, so improvement stalls. | Deep-Dive Segmentation. Analyze open-ended feedback and segment data by customer type, issue, or location. |

How Can Customer Feedback Surveys Produce Better Data?

Customer feedback surveys produce better data when they are tied to a real experience, sent to the right respondents, and followed by serious analysis. Better customer feedback data comes from disciplined design, not from simply sending more surveys.

One way to improve quality is to focus each survey on a clear moment or relationship. Customers give better answers when the survey reflects something specific, such as a purchase, onboarding process, delivery experience, account interaction, or support event.

Another way is to design for honesty. Respondents are more likely to give accurate feedback when surveys feel neutral, manageable, and credible. If the survey feels like a marketing device or a score-protection exercise, the answers may be less candid.

Better data also comes from better analysis. Companies should not stop at totals or averages. They should analyze written feedback, compare segments, identify recurring themes, and determine which issues are most likely to influence satisfaction or loyalty.
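As a rough illustration of comparing segments and counting recurring themes, the Python sketch below groups ratings by segment and tallies themes in written feedback. It assumes the comments have already been coded into themes by an analyst; the data, field names, and helper functions are hypothetical.

```python
from collections import Counter, defaultdict
from statistics import mean

# Hypothetical responses: a 1-5 satisfaction rating, a customer segment,
# and themes an analyst has already coded from the written comment.
responses = [
    {"segment": "new", "rating": 2, "themes": ["onboarding", "billing"]},
    {"segment": "new", "rating": 3, "themes": ["onboarding"]},
    {"segment": "new", "rating": 4, "themes": []},
    {"segment": "established", "rating": 5, "themes": ["support"]},
    {"segment": "established", "rating": 4, "themes": ["billing"]},
    {"segment": "established", "rating": 5, "themes": []},
]


def summarize_by_segment(rows):
    """Return {segment: (response count, mean rating)}."""
    ratings = defaultdict(list)
    for row in rows:
        ratings[row["segment"]].append(row["rating"])
    return {seg: (len(vals), mean(vals)) for seg, vals in ratings.items()}


def theme_counts(rows, segment=None):
    """Count how often each coded theme appears, optionally within one segment."""
    counts = Counter()
    for row in rows:
        if segment is None or row["segment"] == segment:
            counts.update(row["themes"])
    return counts


print(summarize_by_segment(responses))
print(theme_counts(responses, segment="new").most_common())
```

In this made-up data the overall average is about 3.8 out of 5, yet newer customers average 3 and their comments cluster around onboarding, which is the kind of pattern a single average hides.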

What Is the Role of Net Promoter Score in Customer Surveys?

Net Promoter Score is a loyalty metric, not a full explanation of the customer experience. It can help track recommendation intent, but it should not be treated as a complete measure of satisfaction or relationship health.

The strength of Net Promoter Score is its simplicity. It gives companies a common benchmark and an easy way to track a score over time. Its weakness is that it does not explain the reasons behind the score unless the survey includes supporting questions and written feedback.
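NPS is conventionally calculated from a 0 to 10 “How likely are you to recommend us?” question: respondents scoring 9 or 10 are promoters, 7 or 8 are passives, and 0 to 6 are detractors, and the score is the percentage of promoters minus the percentage of detractors. The Python sketch below applies that formula to two hypothetical groups of ten respondents to show how the same score can describe very different customer bases.

```python
def nps(scores: list[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one score")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("scores must be on the 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Two hypothetical groups of ten respondents.
polarized = [10, 10, 10, 10, 10, 10, 0, 0, 0, 0]  # 6 promoters, 4 detractors
lukewarm = [9, 9, 8, 8, 8, 8, 8, 8, 8, 8]         # 2 promoters, 8 passives

print(nps(polarized), nps(lukewarm))
```

Both groups score 20, but one is split between enthusiasts and unhappy customers while the other is mostly passive. Only the supporting questions and written feedback reveal which situation you are in.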

Strong customer surveys treat Net Promoter Score as a starting point, not an ending point. The score may signal that something changed, but the surrounding questions help determine what changed and what should happen next.

While NPS is a vital high-level indicator, relying on it alone can leave significant blind spots in your data. To get a complete picture of the customer journey, expert-designed programs often layer in specific tactical metrics. Understanding when to use Customer Satisfaction (CSAT) for individual touchpoints or Customer Effort Score (CES) to identify friction allows you to move beyond a single score and into actionable territory.

| Metric | What It Measures | When (and When Not) to Use It |
|---|---|---|
| Net Promoter Score (NPS) | Overall loyalty and recommendation intent. | Use for broad relationship benchmarking. Don’t use as the sole metric or a complete measure of satisfaction. |
| Customer Satisfaction (CSAT) | Satisfaction with a specific touchpoint. | Use after specific interactions like a support call or delivery. Don’t use to measure long-term relationship health. |
| Customer Effort Score (CES) | The ease of interacting with your business. | Use to find friction points in high-effort moments like onboarding or checkout. Don’t use to measure overall sentiment. |
| Open-Ended Feedback (Qualitative) | The “why” and emotional sentiment behind the scores. | Use in every survey. Essential for understanding root causes and capturing unique suggestions. Don’t ignore it. |

By selecting the right metric for the right moment, you ensure that your survey program provides the depth needed to drive real operational change.
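CSAT and CES turn individual answers into a score in different ways, and conventions vary between organizations. The Python sketch below assumes two common choices: CSAT as the percentage of respondents picking the top two points on a 1 to 5 scale, and CES as the average answer on a 1 to 7 scale where higher means easier. The scales, function names, and responses are assumptions for illustration.

```python
from statistics import mean


def csat(ratings: list[int], scale_max: int = 5, top_boxes: int = 2) -> float:
    """Percent of respondents choosing one of the top `top_boxes` points on a 1..scale_max scale.

    Top-two-box on a 5-point scale is a common convention, not the only one.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    threshold = scale_max - top_boxes + 1
    satisfied = sum(1 for r in ratings if r >= threshold)
    return 100 * satisfied / len(ratings)


def ces(ratings: list[int]) -> float:
    """Mean answer on an assumed 1-7 "it was easy" scale (higher = less effort)."""
    if not ratings:
        raise ValueError("need at least one rating")
    return mean(ratings)


# Hypothetical responses after a support call and during onboarding.
support_csat = [5, 4, 4, 3, 5, 2, 4, 5]
onboarding_ces = [6, 5, 3, 2, 4, 6, 3]

print(f"Support CSAT: {csat(support_csat):.0f}%")
print(f"Onboarding CES: {ces(onboarding_ces):.2f} / 7")
```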

Are Online Surveys Still Useful?

Online surveys are still useful because they remain one of the most practical and scalable ways to gather customer feedback from many respondents. For many companies, they are the easiest way to conduct structured research across products, geographies, and services.

Their usefulness, however, depends on design and implementation. Online surveys can be launched quickly, but fast deployment does not guarantee valid data. If companies use generic questionnaires, poor answer options, or unclear targeting, online surveys can produce inaccurate data just as easily as they can produce insight.

Their biggest advantage is access. They allow companies to reach many customers efficiently, collect structured responses, and track trends over time. The risk is that ease of use can encourage weak planning.

Are Customer Surveys Worth It for B2B Companies?

Customer surveys are worth it for B2B companies when they are designed to reflect the complexity of the relationship. In B2B environments, surveys help companies identify friction, protect high-value accounts, and uncover risks before they lead to churn.

This matters because B2B growth depends heavily on retention and expansion within existing accounts. As Daniel Hawkyard, Director Analyst at Gartner, notes, “In today’s competitive market, retaining and expanding relationships with current customers is not just a priority—it’s essential for sustainable growth.” For B2B firms, surveys help reveal where the relationship is strong, where it is vulnerable, and where more value can be created.

B2B surveys are often more strategic than simple post-transaction feedback surveys. They can reveal where friction exists in the relationship, how different services affect the account, and what issues may lead to churn or competitor interest in the future.

Because each respondent may represent meaningful revenue, the quality of the survey process matters even more. Sample sizes may be smaller than in consumer research, but the value of each response is often much greater.

The biggest difference between B2C and B2B surveys is that B2C programs usually track many transactions, while B2B surveys are built to protect and grow high-value relationships. That is why a B2B survey must be more precise, more strategic, and more closely tied to account decisions.

| Feature | B2C (Consumer) Model | B2B (Business) Model |
|---|---|---|
| Primary Focus | Transactionality: Volume, speed, and standard benchmarks. | Strategy: Relationship longevity, key accounts, and risk mitigation. |
| Journey Complexity | Often shorter, linear, and focused on single-touchpoint events. | Longer, cyclical, multi-touchpoint journeys with multiple stakeholders. |
| Respondent Value | Comparatively low on an individual basis (often single purchases). | Extremely high. A single response may represent a multimillion-dollar account. |
| Key Metrics | CSAT, standard NPS benchmarks. | Detailed account health metrics, competitive insights, relationship stability. |

When Should a Company Use a Customer Survey Company Instead of Software Alone?

A company should use a customer survey company instead of software alone when it needs trusted findings, not just collected responses. Software can send surveys, but software alone does not ensure strong design, reduce bias, or analyze results in a way that supports business decisions.

A survey company can help determine the right audience, create better questionnaires, improve answer quality, and analyze customer feedback data with more rigor. That is especially important when the survey will influence management strategy, service changes, or account decisions.

This is where many companies underestimate the difference between deployment and research. Sending online surveys is a task. Designing a valid feedback process is a discipline.

If you want customer feedback that leads to better decisions instead of false confidence, Interaction Metrics can help. We design and manage customer surveys that give you a clearer picture of what customers are experiencing, so you can act on the results with confidence.

Ready for statistically honest customer surveys? That’s where Interaction Metrics comes in. We design and deploy surveys that give you data you can trust. Get in touch.