TrueData™ SURVEYS

Survey Data Collection Methods

A Guide to Email, Interviews, Timing, and Response Quality

B2B Survey Experts ﹡ Third-Party Objectivity ﹡ Software Included

We bring certified analysts, proven methods, and everything you need. No licensing costs, no learning curve.

How you collect survey data shapes what data you get. The channel, timing, invitation strategy, anonymity conditions, and follow-up rules all influence who responds, how honestly they respond, and whether your findings reflect the actual customer relationship or merely the collection process itself.

In B2B and high-value relationships, this matters more than in consumer programs. A generic, over-automated survey sent to a strategic account at the wrong moment doesn’t just reduce response rates — it signals that you’re not paying attention to the relationship you’re trying to measure.

At Interaction Metrics, we write surveys, manage data collection, and analyze the results. You’ll have an end-to-end solution with a Findings Report that helps you act with confidence. Book a TrueData™ Demo.

Data collection usually reveals whether a survey program is conventional or science-first.

The conventional approach is to use whatever fielding method the platform makes easiest: a broad email deployment, default reminder timing, and the contact list already on hand. What you get back is often skewed: some contacts respond from multiple devices without realizing it, the wrong person at an account takes the survey, certain segments consistently over-respond while others go quiet. The platform won’t flag any of that.

A science-first approach treats collection as a methodology decision: which channel fits this audience, what timing produces honest rather than reactive responses, who specifically should be invited and why, and what needs to be cleaned before analysis begins. The goal isn’t to collect responses — it’s to collect the right responses from the right people under conditions that support honest answers.

Survey Data Collection: At-a-Glance

| Principle | Conventional Survey Approach | Our Scientific Survey Approach | Science-First Outcomes |
|---|---|---|---|
| Collection Mode | Use the channel that is easiest to launch, regardless of whether it fits the audience or the decision the study needs to support. | Choose the collection mode based on audience, context, sensitivity, and the type of feedback the study is meant to capture. | Better respondent fit and data that is more candid, relevant, and useful. |
| Survey Timing | Send surveys based on operational convenience or platform defaults. | Set timing based on what the survey is trying to measure and when respondents can give the most meaningful answer. | Results that reflect the experience more accurately instead of capturing a rushed or stale reaction. |
| Invitation Strategy | Use generic invitations, default subject lines, and broad outreach to the full list. | Tailor invitations, sender identity, and outreach strategy to the audience, relationship, and survey purpose. | Stronger data quality and higher response rate. |
| Anonymity | Assume respondents will answer honestly if the survey simply says “confidential.” | Design collection conditions to support candor, including realistic confidentiality language, independent administration when needed, and clearer separation from the relationship team. | More honest feedback and less distortion from politeness bias. |
| Reminder Logic | Send reminders automatically on a fixed schedule to everyone who has not responded. | Use reminders selectively based on respondent importance, relationship sensitivity, and whether additional responses are still needed. | Higher response quality without making the process feel automated or careless. |
| In-Field Data Quality | Collect responses and deal with duplicates, partials, and out-of-scope respondents later. | Define in-field quality rules upfront for duplicates, incomplete responses, disengaged responding, and respondent eligibility. | A cleaner dataset and findings that are more defensible before analysis even begins. |
| B2B Access | Send surveys to the available contacts and accept whoever happens to respond. | Deliberately choose the right stakeholders, manage account-level sensitivities, and remove internal bias from who gets invited. | Results that better reflect the real customer relationship, especially in high-value accounts. |

What Is Survey Data Collection and Why Does Mode Matter?

Survey data collection is the process of reaching a defined population through a chosen channel, at a chosen time, under conditions that shape who responds, how candidly they respond, and how useful the results will be.

Mode matters because context changes answers. The same question asked by phone, by email, or on an intercept survey can produce systematically different responses — not because the question changed, but because the circumstances did. A respondent who fills out an email survey at their desk on Tuesday morning is in a different frame of mind than one who answers a pop-up survey immediately after a frustrating support call. Both answers are real. They’re not measuring the same thing.

A strong survey can still produce weak data if the collection method does not fit the audience or the decision the study is meant to support.

The Main Survey Data Collection Methods — and When to Use Each

Email Surveys

Email is the most common B2B survey mode for good reason. It’s scalable, works with existing CRM and contact lists, lets respondents answer on their own schedule, and supports the kind of relationship surveys that B2B programs typically need. For periodic measurement, annual relationship surveys, and most post-interaction programs, email is the right starting point.

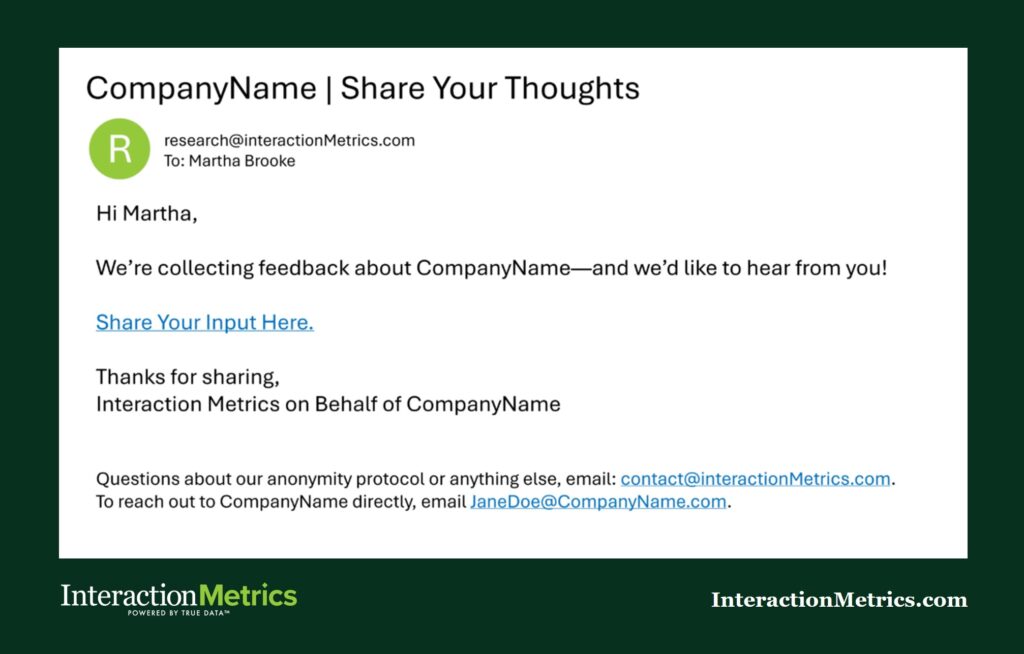

One factor that gets underestimated: who the email comes from changes how it’s received. A survey sent from your own domain reads as a vendor asking for a favor. The same survey sent by a neutral third party reads as independent research — and respondents answer more candidly when they don’t feel like the feedback goes directly back to the person they’re trying to be diplomatic with. When the stakes are high and honest data matters more than operational convenience, third-party administration is worth considering.

Email is the wrong choice when the contact list is degraded, when key contacts are already over-surveyed, or when the population is too small for respondents to believe anonymity is real.

A third-party research firm like Interaction Metrics can evaluate list quality, reduce internal bias, and give respondents more confidence that their feedback will be handled independently.

Phone Surveys and Live Interviews

Phone produces richer data than email, particularly for open-ended exploration, senior contacts who won’t fill out a form, and situations where follow-up questions matter. The distinction between a structured phone survey — standardized questions, quantifiable output — and a qualitative interview — exploratory, conversational, dialogue-driven — is important. They serve different purposes and require different administration.

The tradeoff is social desirability risk. Respondents speaking live with anyone connected to your organization are more likely to soften criticism, hedge on sensitive topics, and give the answer that preserves the relationship rather than the one that reflects their experience. Third-party interviewing reduces this: an independent interviewer removes the relational pressure that distorts answers, and respondents know it.

Online Intercept and Website Surveys

Intercept surveys trigger off behavior — a page visit, a completed transaction, a support interaction that just closed. The contextual relevance is high; you’re catching the respondent while the experience is fresh. For transactional measurement, this timing advantage is real.

The limitation is sample bias. Intercepts only reach active users. Churned customers, disengaged accounts, and contacts who’ve quietly stopped interacting with your platform never see the survey — which means the population most likely to have a critical perspective on the experience is systematically excluded.

Embedded and In-Product Surveys

Embedded surveys appear inside a product interface, a portal, or a post-transaction communication. They reduce friction for short, targeted measures and tend to produce stronger completion rates when the question is specific and the context is clear. The constraint is format: embedded surveys aren’t suited to anything that requires context, skip logic complexity, or open-ended responses that need room to breathe.

Mixed-Mode Approaches

Using more than one collection method in the same program — email as the primary channel, phone follow-up for non-responders, for example — improves representativeness when a single mode leaves systematic gaps in the population. It also adds complexity. When you mix modes, the results aren’t directly comparable across channels without accounting for mode effects in the analysis. That’s a methodology decision that needs to be made upfront, not discovered when the data comes back looking inconsistent.

Survey Timing, Cadence, and the Over-Surveying Problem

When you ask shapes what you hear. A survey sent immediately after an interaction captures a different signal than one sent six months into a relationship. Neither is wrong — they’re measuring different things, and treating them as equivalent is where many programs go off track.

Post-transaction surveys have a narrow window. Too soon, and the customer hasn’t had time to process the experience. Too late, and the moment has passed and recall degrades. Relationship surveys operate on a different logic — they’re measuring the accumulated experience of working with your organization, not a specific touchpoint, so timing is about the survey cycle rather than the interaction.

Over-surveying is a real problem in B2B programs. Key contacts at strategic accounts are often already receiving surveys from multiple vendors. When yours arrives too frequently, or without clear relevance to their role, they stop responding — or they respond quickly and carelessly just to clear their inbox. That’s not data you can act on. Frequency decisions and data quality are connected in ways that don’t show up in the response rate.

Relationship vs. Transactional Survey Programs

Relationship surveys — typically annual or semi-annual — are designed to measure the health of the overall account relationship. They look at the company as a whole: how well the partnership is working, where competitive risks might be building, what would need to change to deepen the relationship. The output shapes strategic decisions.

Transactional surveys operate at the touchpoint level — after a repair, a support call, a delivery, a rep visit. The feedback is immediate, operational, and specific: what about this interaction could have gone better? Because the feedback is tied to a specific event, it can trigger a direct response. A score that indicates frustration can prompt a follow-up call the same week.

Strong programs use both, with a clean separation between what each is designed to measure and no conflation of the two in reporting. A transactional score trending down doesn’t mean the relationship is at risk. An annual relationship score holding steady doesn’t mean individual interactions are going well. Both data streams are useful; neither substitutes for the other.

Survey Invitations, Subject Lines, and the First Impression

The invitation is the first decision that affects whether someone opens the survey. It’s also where many programs spend the least effort, defaulting to whatever the platform auto-generates.

Subject lines should be specific, not clever. Recipients make an open-or-ignore decision in a few seconds, and a vague subject line signals a generic survey. “We’d love your feedback!” tends to lose to “Quick question about your recent delivery from [CompanyABC]” because the second one tells the recipient immediately that this is relevant to something that actually happened to them.

Sender identity matters more than most organizations realize. In B2B programs, a survey arriving from a named person — ideally someone the contact recognizes — carries more weight than one from a generic address. The sender is the first signal about whether this is worth the contact’s time.

Use a Reply-To Email Address

Using a named, reply-to email address (rather than a do-not-reply) allows you to hear from some customers who want to give feedback but don’t want to take the survey.

It also captures auto-replies, which can reveal that a contact has changed their email address.

Plus, it signals that you’re genuinely open to a response, not just collecting data in one direction.

Invitation copy should answer three questions before the respondent has to ask them: what is this about, how long will it take, and what happens with the feedback? Leaving any of those vague reduces response rates and trust simultaneously.

Survey Reminder Emails

One well-timed reminder typically adds meaningful response volume without damaging relationship perception. The timing and tone matter more than whether you resend the original or write fresh copy — though fresh copy tends to perform better with contacts who ignored the first email because the subject line didn’t land.

Know when not to send one. Senior contacts at strategic accounts, accounts with known relationship sensitivities, and programs that have already collected a representative set of responses don’t need a reminder. Pushing for more responses when you already have what you need signals an automated process rather than a thoughtful one.
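As a rough illustration, the reminder rules above could be written down as an explicit filter rather than left to a platform default. This is only a sketch: the `Contact` fields and the `responses_still_needed` flag are hypothetical names chosen for the example, not features of any particular survey tool.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    email: str
    responded: bool
    is_senior_strategic: bool = False  # hypothetical flag: senior contact at a strategic account
    sensitive_account: bool = False    # hypothetical flag: known relationship sensitivity


def contacts_to_remind(contacts: list[Contact], responses_still_needed: bool) -> list[str]:
    """Return the emails that should get a reminder under the selective rules."""
    # If the program already has the responses it needs, nobody is reminded.
    if not responses_still_needed:
        return []
    return [
        c.email
        for c in contacts
        if not c.responded
        and not c.is_senior_strategic
        and not c.sensitive_account
    ]
```

Writing the rule down this way makes the exclusions a deliberate decision that can be reviewed before any reminder goes out.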

Anonymity, Confidentiality, and Survey Honesty

Anonymity and confidentiality are not the same thing, and treating them interchangeably creates a trust problem that affects how honestly people respond.

An anonymous survey means responses genuinely cannot be linked to the individual — not by the researcher, not by the client, not by anyone. In B2B programs with small account lists, true anonymity is rare. When only 12 people from a company took the survey and one of them is the VP of Operations, there’s often enough context in the responses to identify who said what, regardless of what the invitation says.

A confidential survey means responses are linked internally but won’t be shared in identified form. That’s the more realistic standard in B2B research — and it only works if respondents actually believe it. A confidentiality assurance buried in fine print doesn’t reduce social desirability bias. Being specific does: who sees the data, in what form, at what level of aggregation.
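One way to make “level of aggregation” concrete is a minimum group size for reporting: scores for a group are shown only when enough people responded that no individual can be singled out. The sketch below assumes a threshold of five, which is an illustrative choice and not a figure from this guide; the function and parameter names are hypothetical.

```python
from statistics import mean
from typing import Optional


def reportable_means(
    scores_by_group: dict[str, list[int]],
    min_group_size: int = 5,  # assumed threshold, for illustration only
) -> dict[str, Optional[float]]:
    """Average each group's scores, suppressing groups that are too small to report."""
    report: dict[str, Optional[float]] = {}
    for group, scores in scores_by_group.items():
        if len(scores) >= min_group_size:
            report[group] = mean(scores)
        else:
            # Too few respondents: withhold the score rather than risk identification.
            report[group] = None
    return report
```

Whatever threshold a program chooses, stating it in the invitation gives respondents something specific to trust.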

The deeper issue in high-touch B2B relationships is that even well-communicated confidentiality doesn’t fully overcome politeness bias. When a contact has worked with the same account manager for four years, the relationship feels more immediate than the privacy assurance. Structural solutions work better than disclosure language alone.

Working with a third-party firm (like Interaction Metrics) gives respondents the confidence to be honest.

When customers aren’t worried about retaliation or awkward follow-up, they say what they actually think. That honesty makes the data more accurate and the findings more actionable.

It also increases response rates: more customers are willing to participate when they trust the process is genuinely confidential.

Incentives — When They Help and When They Distort

Incentives increase response volume. They also shift who responds — contacts motivated primarily by a gift are a different group than those who respond because the topic matters to them. That distinction is worth keeping in mind as you design your program.

In B2B and high-value programs, the right incentive signals investment. A $25 Starbucks gift card, a quality power bank, or a branded cooler (to name a few) is a way of saying: your time matters, and we’re not taking it for granted.

When relationships are high-value, the cost of a gift is a small price for the feedback and the goodwill it generates.

The risk isn’t incentives themselves — it’s the wrong incentive for the audience. A token Amazon card isn’t likely to move a senior decision-maker. But a thoughtful, tangible gesture, paired with genuine relationship context and the clear sense that their feedback will be used, is often exactly the right lever.

Incentives matter most in consumer panels, low-engagement populations, and research contexts where compensation is standard. In B2B, they work best when they’re positioned as appreciation rather than compensation — and when the rest of the data collection program is designed to reinforce that the feedback actually leads somewhere.

Data Quality During Collection — What to Control Before Analysis Begins

Data quality problems that start during collection can’t be fully fixed in analysis. They need to be anticipated, defined, and controlled while the survey is in the field — not discovered when the numbers look odd.

Duplicate responses are more common than most programs account for. A contact who receives a survey by email, opens it on their phone, and then completes it later on their laptop may appear twice in the dataset. Matching by contact ID, email address, or response pattern catches most of these — but the rule for which record to keep (first, last, or most complete) needs to be set before cleaning begins, not decided after the fact.
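A minimal sketch of that keep rule, assuming each response carries a contact ID, a submission time, and a set of answers. The `Response` structure and the `deduplicate` function are hypothetical names for illustration; real exports will differ by platform.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Response:
    contact_id: str
    submitted_at: datetime
    answers: dict[str, Optional[int]]  # question id -> score, None if skipped


def answered_count(r: Response) -> int:
    """Number of questions the respondent actually answered."""
    return sum(1 for v in r.answers.values() if v is not None)


def deduplicate(responses: list[Response], keep: str = "most_complete") -> list[Response]:
    """Keep one record per contact, using a rule chosen before cleaning begins."""
    if keep == "first":
        pick = lambda group: min(group, key=lambda r: r.submitted_at)
    elif keep == "last":
        pick = lambda group: max(group, key=lambda r: r.submitted_at)
    elif keep == "most_complete":
        # Ties on completeness go to the most recent submission.
        pick = lambda group: max(group, key=lambda r: (answered_count(r), r.submitted_at))
    else:
        raise ValueError(f"unknown keep rule: {keep!r}")

    groups: dict[str, list[Response]] = {}
    for r in responses:
        groups.setdefault(r.contact_id, []).append(r)
    return [pick(group) for group in groups.values()]
```

The `keep` argument is the point of the sketch: the rule is a named parameter set once, not a judgment made record by record.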

Incomplete responses require a similar policy decision upfront. Some partial completions are analytically useful; others aren’t. A respondent who answered 8 of 10 questions is different from one who answered 2. Defining “complete enough” before fielding ends means the cleaning process is consistent and defensible.
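A “complete enough” rule can be as simple as a share of required questions answered. In the sketch below, the 80% threshold is an assumption made for the example, not a recommendation from this guide, and the function names are hypothetical.

```python
from typing import Optional


def completion_ratio(answers: dict[str, Optional[int]], required: list[str]) -> float:
    """Share of required questions that have a non-empty answer."""
    if not required:
        raise ValueError("required question list must not be empty")
    answered = sum(1 for q in required if answers.get(q) is not None)
    return answered / len(required)


def is_complete_enough(
    answers: dict[str, Optional[int]],
    required: list[str],
    threshold: float = 0.8,  # assumed cut-off, to be set before fielding ends
) -> bool:
    """Apply the pre-defined completeness rule to one response."""
    return completion_ratio(answers, required) >= threshold
```

Under this assumed threshold, a respondent who answered 8 of 10 questions is kept and one who answered 2 of 10 is not.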

Straightlining, or selecting the same answer for every item in a matrix, is a sign of disengagement. It inflates apparent consensus and skews scores toward whichever option was repeated. Flagging and reviewing straightlined responses before including them in analysis is standard practice in rigorous programs; skipping it is one of the reasons platform-default outputs often look more agreeable than they should.
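Flagging straightlined matrices for review is straightforward to sketch. The example assumes each response's matrix answers arrive as a list of scores, and it ignores very short matrices, where identical answers are plausible; the `min_items` value is an assumption for illustration.

```python
def is_straightlined(matrix_answers: list[int], min_items: int = 4) -> bool:
    """True when every item in a sufficiently long matrix received the same answer."""
    if len(matrix_answers) < min_items:
        return False  # too few items to treat identical answers as disengagement
    return len(set(matrix_answers)) == 1


def flag_for_review(responses: dict[str, list[int]]) -> list[str]:
    """Return the response ids whose matrix answers should be reviewed by a person."""
    return [rid for rid, answers in responses.items() if is_straightlined(answers)]
```

The function flags rather than deletes: a contact who is genuinely satisfied across the board can also give identical answers, so the final call stays with a reviewer.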

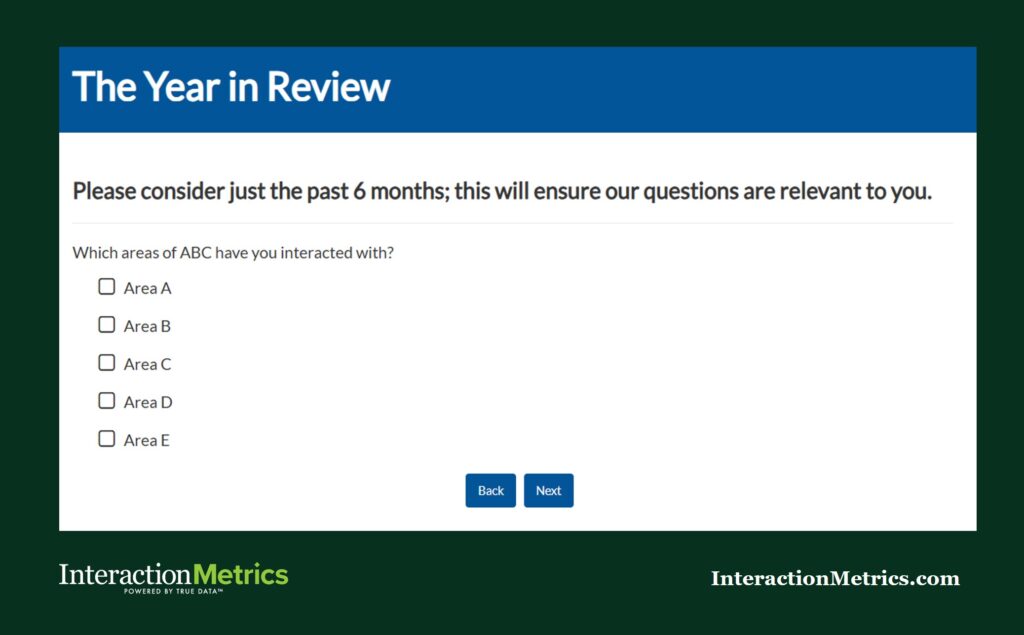

Out-of-scope respondents are a B2B-specific problem. When account managers influence who gets invited, contacts who lack meaningful experience with the measured touchpoints sometimes end up in the sample. A brief screening question at the start of the survey — “Did you interact with our technical support team in the past 6 months?” — routes those contacts out before their best-guess answers contaminate the data.

Platform and Technology — What the Methodology Requires

Platform choice should follow methodology requirements, not lead them. The question isn’t which survey tool has the most features — it’s which tool can execute the methodology you’ve designed without forcing compromises.

The capabilities that matter: skip logic and branching that can handle role-based routing, mobile optimization for contacts who won’t respond on a desktop, contact management that supports deduplication before fielding begins, reminder automation with enough flexibility to vary timing and copy, and data export clean enough to work with outside the platform’s own reporting environment.

What Is Skip Logic?

Skip logic routes respondents to different questions based on their earlier answers, such as which parts of your business they’ve actually interacted with.

When a respondent selects which areas of your business they’ve engaged with, the survey can show them only the questions that are relevant to their experience — and skip everything else.

The result is a shorter, more relevant survey for the respondent, and cleaner data for you, because no one is answering questions about touchpoints they’ve never touched.
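In code terms, this kind of routing amounts to a lookup from the areas a respondent selected to the questions they should see. The area names and question ids below are hypothetical placeholders, not a real survey design.

```python
# Hypothetical mapping from business area to the questions about that area.
QUESTIONS_BY_AREA = {
    "technical_support": ["support_speed", "support_resolution"],
    "delivery": ["delivery_on_time", "delivery_condition"],
    "account_management": ["rep_responsiveness"],
}

# Questions every respondent sees, regardless of routing.
CORE_QUESTIONS = ["overall_satisfaction"]


def questions_for(selected_areas: list[str]) -> list[str]:
    """Build the question list for one respondent from the areas they selected."""
    routed = list(CORE_QUESTIONS)
    for area, questions in QUESTIONS_BY_AREA.items():
        if area in selected_areas:
            routed.extend(questions)
    return routed
```

A respondent who selected only delivery would see the core question plus the two delivery questions, and nothing about support or account management.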

What to watch for: platforms that constrain methodology by limiting skip logic complexity, locking you into particular scale formats, or making third-party administration impractical. If the platform is shaping the survey design rather than supporting it, that’s the wrong platform for a science-first program.

Interaction Metrics works across platforms — Qualtrics, Alchemer, and others — because the methodology drives the tool choice, not the reverse. A platform is infrastructure. What you build on it is what determines whether the data is worth analyzing.

Special Considerations for High-Value and B2B Data Collection

In consumer programs, data collection is a logistics problem. In B2B and high-value programs, it’s a relationship decision. The choices you make about who gets invited, how they’re contacted, and what happens after they respond all communicate something about how seriously you take the accounts you’re trying to understand.

Multi-stakeholder access is one of the more practical challenges. A single account might have a procurement contact, a day-to-day operations user, and an executive sponsor — each with different vantage points, different tolerances for survey length, and different relationships with whoever is sending the survey. Routing each of them appropriately requires knowing who’s on the list and designing the outreach accordingly.

The account manager influence problem is worth naming directly. When account managers control who gets invited to a survey, they sometimes — consciously or not — steer away from contacts they’re uncertain about. That’s a sampling bias built directly into the collection process, and it produces data that reflects the accounts managers feel good about rather than a representative picture of the relationship. Independent administration removes that influence.

Closed-loop follow-up is a collection design decision, not just a post-analysis courtesy. In high-value B2B programs, a major account that received a survey and never heard anything back isn’t neutral — it’s a signal that the survey was extraction, not dialogue. Deciding how you’ll close the loop, and with whom, needs to happen before the survey goes out, because it affects how you design the survey, what questions you ask, and what data you need to have in hand when it’s time to follow up.

There are situations where a survey isn’t the right instrument at all. When the account relationship is particularly sensitive, when the topic requires real dialogue rather than a rating, or when the population within an account is too small for quantitative analysis to hold up, a structured interview gets you further. That’s not a fallback — it’s a legitimate methodology choice for the right context.

The Bottom Line

Data collection is where your methodology meets the real world — and where the gap between a well-designed program and one that produces trustworthy data becomes visible. Every decision in this stage, from the channel you choose to the way you clean what comes back, affects whether the findings are worth acting on.

Contact us to talk through what a more rigorous data collection approach would look like for your program.


Let’s Build the Right Survey for You!

Stop settling for surveys that fall short. Let’s build a survey that gives you honest answers, drives action, and accelerates growth.
